A field note from running customer security operations with AI agents.

For the past stretch of my career, a lot of what I called "security work" wasn't really security work. It was the work around the work. Pulling data out of systems that didn't want to give it up. Digging through vendor documentation to figure out which checkbox in which console controlled which behavior. Stitching together API calls. Shaping words into reports that someone, somewhere, would skim before approving.

That's the work around the thinking. And the actual security thinking, the part I trained twenty years for, was squeezed into whatever time was left.

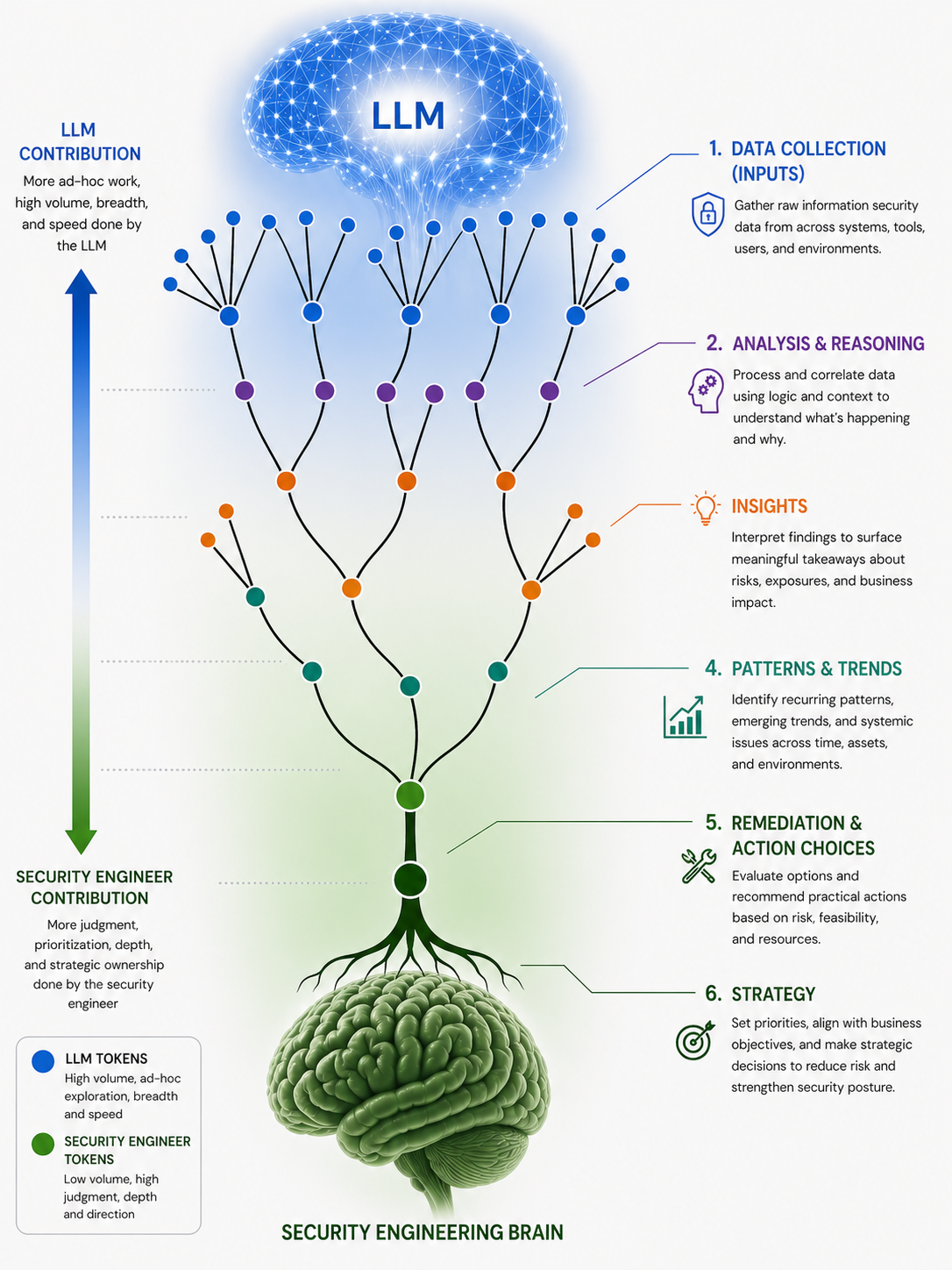

That ratio has flipped. And working through that flip, across detection and response, incident response, vulnerability management, compliance, and pen testing, has surfaced a pattern worth describing. We've started calling it the Security Engineering Brain.

The shape of the work today

Most engagements now follow the same arc. Data collection agents sit inside the customer's environment and pull together whatever a given task needs: logs, configurations, asset inventories, ticket history, identity data, scan results. They organize it. They reason over it. By the time a task lands on my desk, roughly 90% of the work is already done.

That 90% isn't a rough draft. It's a real attempt at the answer. The agent has triaged an alert and proposed a root cause. It has looked at a vulnerability backlog from ten different angles and surfaced the ten findings that matter most this week. It has drafted the compliance narrative, mapped controls to evidence, and flagged the gaps. It has made judgment calls, and it shows its reasoning.

My job is the remaining 10%. I read the agent's work, I ask the questions it didn't ask itself, and I approve, redirect, or reframe. Twenty years of pattern-matching across breaches, audits, and architectures lets me hold context the agent can't yet hold: the political shape of a customer's environment, the reason a particular control has been broken for three quarters, the tradeoff a CISO is quietly optimizing for. That's where my attention goes now.

It's worth saying plainly: this is the ratio I always wanted. The dream was never to do 30% of the thinking on top of 70% of the toil. The dream was to spend almost all of my time on the part of the job that actually required me.

Why the diagram looks the way it does

The illustration shows how we think about the division of labor.

The blue branches at the top are LLM tokens: high volume, ad-hoc, exploratory, broad, fast. The green roots at the bottom are security engineer tokens: low volume, high judgment, deep, directional. The work flows from raw signal at the canopy down to strategic posture at the root system, and the human contribution gets denser as you descend.

Here's how each layer is shifting:

- Data Collection. Fully commoditized. Agents gather raw security data from across systems, tools, users, and environments. There is no romance left in writing yet another collector script, and I don't miss it.

- Analysis & Reasoning. Largely commoditized. Modern agents are good at correlating data, applying logic, and holding the relevant context for a single incident or finding. The traditional core of security knowledge (how a technology works, how to write a detection rule, how to map a control to a piece of compliance text) is exactly the kind of accumulated knowledge LLMs absorb better than any human brain. I say this as someone whose career was partly built on accumulating that knowledge. It's fine. The knowledge was always supposed to be a means, not the end.

- Insights. Mostly the agent, with meaningful human contribution. The agents surface findings about risk, exposure, and business impact, and they're good at it, but not complete. Sometimes they miss the insight that matters most, the one that only makes sense if you've seen this customer's last three incidents. We're still developing the muscle of feeding those misses back into a security knowledge base that compounds. That feedback loop is one of the more interesting open problems in this work.

- Patterns & Trends. A genuine collaboration. Agents are excellent at spotting recurring patterns and emerging trends across time, assets, and environments. Humans are still better at deciding which of those patterns deserve organizational attention versus which are noise dressed up as signal.

- Remediation & Action Choices. Increasingly human. When you're choosing between three remediations, each with different resource costs, implementation friction, and risk reduction, the right answer depends on the customer's environment, their team's capacity, their culture, and what they tried last year that didn't work. The agent can lay out the options cleanly. Choosing between them is judgment, and judgment is where I want to spend my time.

- Strategy. Almost entirely human. Setting priorities, aligning security to business objectives, deciding what we're going to be good at over the next twelve to twenty-four months: this is the deepest part of the root system. It's where understanding a customer (the people, the politics, the appetite for change) dominates everything else.
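The Remediation & Action Choices layer above is the easiest one to make concrete. What follows is a minimal sketch, not our actual tooling: the names `RemediationOption`, `agent_rank`, and `human_choose`, the 1-to-5 scales, the naive scoring formula, and the three example options are all hypothetical, chosen only to illustrate the split. The agent lays out and orders the options; the human picks one and records why, including when the pick overrides the agent's top choice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RemediationOption:
    # All three scales are hypothetical: 1 (low) to 5 (high).
    name: str
    resource_cost: int
    friction: int
    risk_reduction: int


@dataclass(frozen=True)
class Decision:
    chosen: RemediationOption
    rationale: str
    overrode_agent: bool


def agent_rank(options):
    """Agent side: order options by a deliberately naive score."""
    def score(o):
        return o.risk_reduction - o.resource_cost - o.friction
    return sorted(options, key=score, reverse=True)


def human_choose(ranked, pick, rationale):
    """Human side: choose any option by name and record the reasoning."""
    by_name = {o.name: o for o in ranked}
    if pick not in by_name:
        raise ValueError(f"unknown option: {pick}")
    chosen = by_name[pick]
    return Decision(chosen, rationale, overrode_agent=(chosen != ranked[0]))


# Illustrative data only; not drawn from a real engagement.
options = [
    RemediationOption("patch", resource_cost=2, friction=3, risk_reduction=4),
    RemediationOption("segmentation", resource_cost=4, friction=4, risk_reduction=5),
    RemediationOption("waf_rule", resource_cost=1, friction=1, risk_reduction=3),
]

ranked = agent_rank(options)
decision = human_choose(
    ranked,
    pick="patch",
    rationale="A similar WAF rule was tried last year and rolled back.",
)
print([o.name for o in ranked], decision.overrode_agent)
```

The point of the sketch is the `rationale` field: the score is something an agent can compute, while the reason for overriding it is context that lives with the engineer, such as what the customer tried last year that didn't work.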

Why this work has become joyful

I want to be direct about something that doesn't get said enough in security writing: this work is more joyful now.

Not because it's easier. The hard parts are still hard, and the stakes haven't dropped. It's joyful because the proportion of my day spent actually thinking about security and compliance, the work I came here to do, has gone way up. The proportion spent on the work around the thinking (fighting tools, parsing docs, formatting tables, writing the same kind of report for the eighth time) has gone way down.

Security knowledge has been commoditized. That sounds like it should be a threat to people like me. It isn't. It's a relief. The parts of the job that were valuable because they were tedious are no longer valuable, and the parts that were always supposed to be the point (judgment, prioritization, depth, strategic ownership) are finally getting the room they deserve.