Scoping and Segmentation for Cloud Infrastructure: PCI-DSS v4.0.1

Forward Deployed Engineer | Building AI-native cloud security at Transilience AI | PCI DSS, HITRUST, ISO 27001, SOC 2, PCI-SSS, PCI-3DS, HIPAA

Note: This guide uses AWS as the reference architecture for examples and diagrams. The segmentation principles, pen testing methodologies, and scope reduction strategies apply equally to Azure, GCP, and other cloud service providers.

Why Scope Is Your Biggest PCI Problem

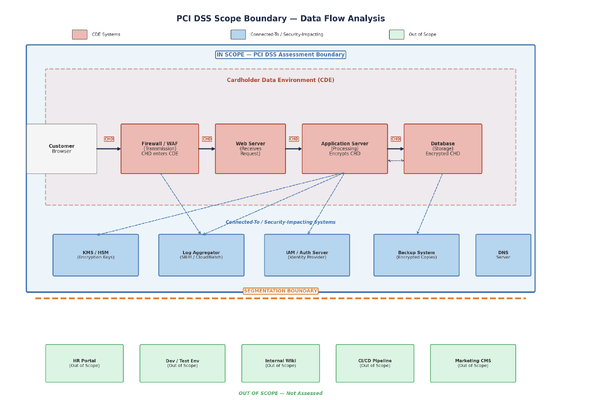

Under PCI DSS v4.0.1, scope comes down to one question: where does card data live, move, or get touched?

Any system that stores, processes, or transmits cardholder data is inside the boundary. So is any system with unrestricted connectivity to one that does. And anything that could affect the security of card data gets pulled in too, whether it handles a single PAN or not.

Cardholder Data (CHD) includes:

- Primary Account Number (PAN)

- Cardholder name

- Expiration date

- Service code

Sensitive Authentication Data (SAD) includes:

- Full track data, whether magnetic stripe or chip equivalent

- CAV2, CVC2, CVV2, or CID verification codes

- PINs and PIN blocks

SAD must never be stored after authorization, even if encrypted.

Together, these data elements define your cardholder data environment (CDE). Every system component, person, and process that touches CHD or SAD sits inside the CDE. Systems with unrestricted connectivity to those components get pulled into the boundary as well. Authentication servers, firewalls, DNS, log aggregators: if they could impact the security of that data, they are part of the assessment.

On a flat network, unrestricted connectivity means everything is in scope:

- Web servers

- Internal tools

- Developer workstations

- Legacy reporting systems

The standard is explicit that restricting account data to as few locations as possible may require reengineering long-standing business practices. The cost of not doing so is significant: more than 250 requirements and sub-requirements applied to every system, higher audit fees, larger attack surfaces, and slower engineering velocity.

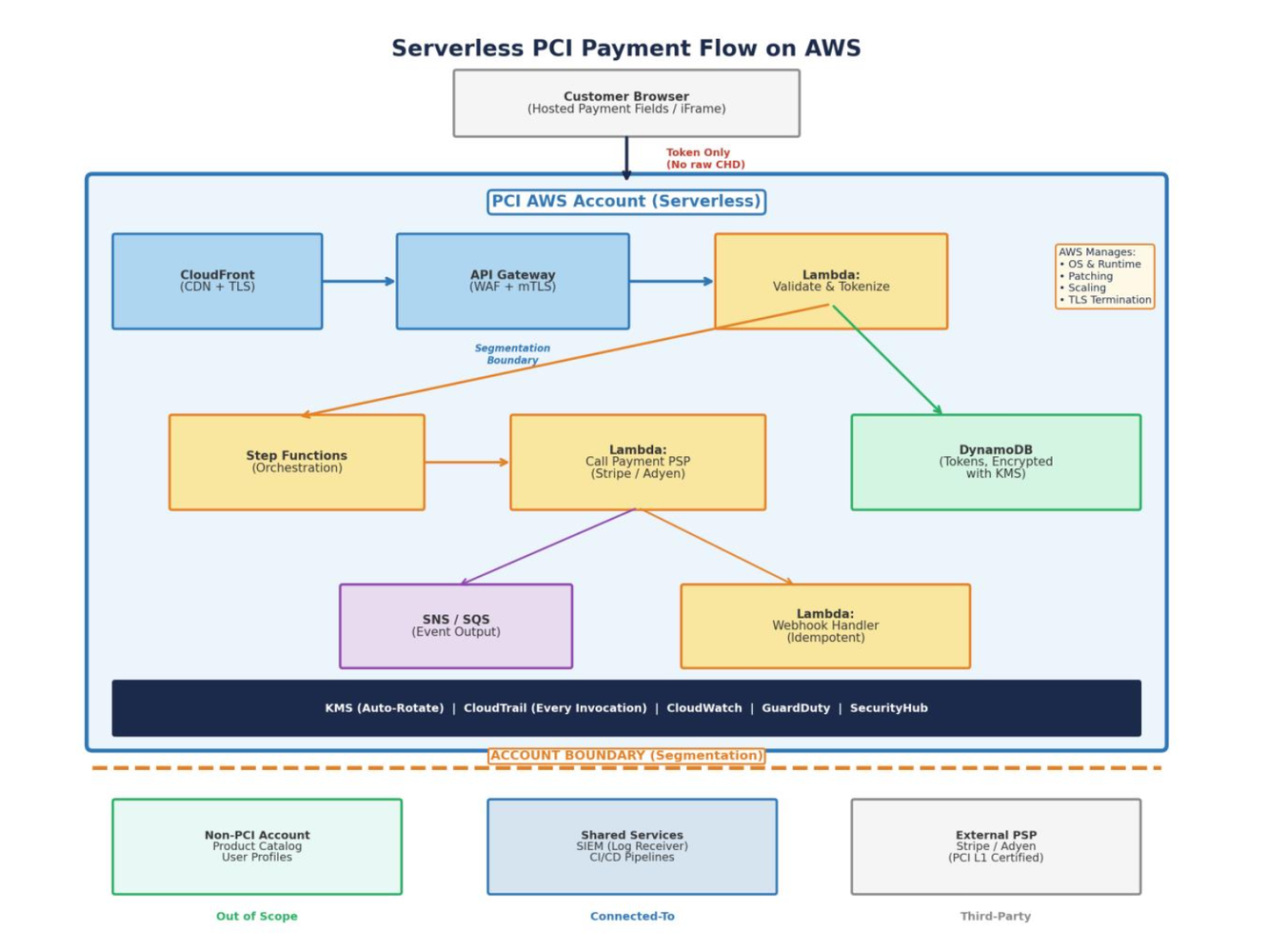

Determining Scope: Follow the Data

Scope determination is fundamentally a data-flow exercise. Trace how cardholder data enters, moves through, and exits your environment in three steps.

Step 1: Map Data Entry Points

Identify every channel through which CHD enters your organization:

- E-commerce checkout forms

- Point-of-sale terminals

- Call center agents

- Mobile applications

- Third-party integrations

Each is the start of a data flow line that must be traced end to end.

Step 2: Trace Data Flows

For each entry point, document how CHD moves across systems and networks, including:

- Processing

- Storage

- Transmission

- Eventual deletion

This mapping forms the foundation of your scope boundary.
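Documented flows are often cross-checked against what is actually sitting in logs, exports, and message payloads. The sketch below is one minimal way to do that, assuming a simple regex plus Luhn checksum is an acceptable first-pass filter. The function names are illustrative, and a real discovery tool would also handle BIN ranges, encodings, and binary formats.

```python
import re

# Candidate PANs: 13 to 19 digits, optionally separated by single spaces or hyphens.
PAN_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    # Walk right to left, doubling every second digit.
    for index, char in enumerate(reversed(digits)):
        value = int(char)
        if index % 2 == 1:
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def find_probable_pans(text: str) -> list:
    """Return masked versions of Luhn-valid digit runs found in text."""
    hits = []
    for match in PAN_CANDIDATE.finditer(text):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            # Keep only the first six and last four digits so the finding is not CHD itself.
            hits.append(digits[:6] + "*" * (len(digits) - 10) + digits[-4:])
    return hits


# 4111 1111 1111 1111 is a widely used test card number, not a live account.
print(find_probable_pans("checkout debug: card=4111 1111 1111 1111 status=ok"))
```

Any hit in a system that the data-flow diagram says never sees CHD is a sign that the diagram, not the scanner, needs correcting.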

Step 3: Categorize Systems by Exposure

With data flows mapped, classify every system according to how it interacts with CHD, SAD, or the security controls around them.

- Systems that directly store, process, or transmit CHD are in scope

- Systems with unrestricted connectivity to those assets are in scope

- Systems that can affect the security of CHD are in scope

- Only systems with demonstrably effective isolation can be left out

That last point is where most teams get into trouble. They identify the CDE correctly, but they overestimate the quality of their segmentation and underestimate the number of supporting systems that still influence CDE security.
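The categorization rules above can be sketched as a small classifier. This is an illustration only: the system names, the `reachable` pairs, and the `security_services` set are hypothetical inputs you would derive from your own inventory and firewall or security group data.

```python
from enum import Enum


class Scope(Enum):
    CDE = "stores, processes, or transmits CHD"
    CONNECTED = "unrestricted connectivity to the CDE"
    SECURITY_IMPACTING = "can affect the security of the CDE"
    OUT_OF_SCOPE = "isolated by validated segmentation"


def classify(systems, handles_chd, reachable, security_services):
    """Assign each system a scope category.

    systems: iterable of system names
    handles_chd: set of systems that store, process, or transmit CHD
    reachable: set of (source, destination) pairs with an open network path
    security_services: set of systems providing auth, logging, DNS, etc. to the CDE
    """
    result = {}
    for name in systems:
        if name in handles_chd:
            result[name] = Scope.CDE
        elif any((name, cde) in reachable or (cde, name) in reachable
                 for cde in handles_chd):
            result[name] = Scope.CONNECTED
        elif name in security_services:
            result[name] = Scope.SECURITY_IMPACTING
        else:
            # Only defensible if the isolation has been tested, not assumed.
            result[name] = Scope.OUT_OF_SCOPE
    return result


inventory = ["payment-api", "jump-host", "auth-server", "marketing-site"]
scopes = classify(
    inventory,
    handles_chd={"payment-api"},
    reachable={("jump-host", "payment-api")},
    security_services={"auth-server"},
)
for name, scope in scopes.items():
    print(f"{name}: {scope.name}")
```

The default branch is the one to distrust: a system lands in `OUT_OF_SCOPE` here simply because nothing in the inputs says otherwise, which is the same overestimate of segmentation quality described above.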

Reducing Scope: The Core Strategies

Four approaches, often used in combination, shrink the PCI boundary.

1. Tokenization

Replace the PAN at the edge with a token that cannot be mathematically reversed to the original number. Hosted payment fields mean raw card data never touches your infrastructure.
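To make the idea concrete, here is a toy vault-style tokenizer. It is an assumption-laden sketch, not how any particular provider works: the `TokenVault` class and the `tok_` prefix are invented for illustration, and in a hosted-fields design this logic lives entirely on the provider's side.

```python
import secrets


class TokenVault:
    """In-memory stand-in for a tokenization service (illustrative only)."""

    def __init__(self):
        # The token-to-PAN mapping is the only place the PAN exists.
        self._token_to_pan = {}

    def tokenize(self, pan: str) -> str:
        # The token is random, so it has no mathematical relationship to the PAN.
        token = "tok_" + secrets.token_hex(16)
        self._token_to_pan[token] = pan
        return token

    def detokenize(self, token: str) -> str:
        # Only a caller with access to the vault can recover the PAN.
        return self._token_to_pan[token]


vault = TokenVault()
token = vault.tokenize("4111111111111111")  # test card number
print(token)  # a fresh random value on every run
```

Systems that only ever hold `token` can be argued out of the CDE. The vault, and anything that can call `detokenize`, cannot.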

2. Network Segmentation

Isolate CDE systems using firewalls, security groups, or VLANs. Segmentation is recommended, not required, but without it your entire network is in scope. Effectiveness must be validated through segmentation pen testing under Requirement 11.4.5.

3. Outsourcing to PCI-Compliant Providers

Delegating payment processing to a validated third party with an Attestation of Compliance (AOC) offloads CHD handling. You remain responsible for managing the relationship and verifying the provider's compliance.

4. Point-to-Point Encryption (P2PE)

P2PE encrypts CHD at the point of interaction and keeps it encrypted until it reaches the solution provider's decryption environment, removing your network from scope for many requirements.

In my experience, most teams stop here. They segment, they tokenize, they outsource, and they assume the job is done. But segmentation only counts if it holds under attack. The rest of this article focuses on how to build and validate that boundary in cloud infrastructure.

Implementing Segmentation in Cloud Infrastructure

A critical clarification first: segmentation of the CDE from the rest of your network is not itself a PCI DSS requirement. The standard says so explicitly. However, without it, the entire network is in scope.

Segmentation is strongly recommended because it reduces:

- Assessment scope

- Cost of compliance

- Risk of cardholder data compromise

Cloud environments give you segmentation tools that do not exist on-premises, but they also introduce attack surfaces that teams miss repeatedly. The shared responsibility model means the cloud service provider manages physical and hypervisor isolation. Everything above that, including VPC design, IAM policies, resource policies, and account boundaries, is yours to get right and yours to prove.

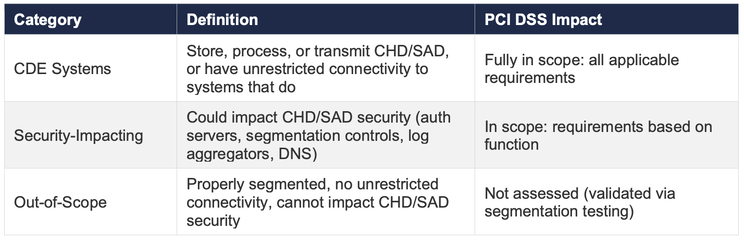

EC2 (Instance-Based): Segmentation Controls

When your CDE runs on EC2, segmentation is a layered network exercise. You are building walls at multiple levels, and each wall must be independently testable.

Layer 1: Account Isolation

The strongest segmentation boundary in AWS is the account boundary.

A dedicated PCI AWS account within an AWS Organization ensures that non-PCI workloads have no implicit network connectivity to CDE resources. IAM policies, service control policies (SCPs), and billing are all isolated. Even if an attacker compromises a non-PCI account, they face a hard boundary before reaching the CDE.
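As one possible guardrail at this layer, an SCP can deny the actions that create new network paths unless a designated role performs them. The sketch below builds such a policy document in Python. The statement ID and the `network-admin` role name are hypothetical, and the action list is a starting point rather than a complete set.

```python
import json


def build_network_guardrail_scp(exempt_role_arn_pattern: str) -> dict:
    """Build an SCP that denies new peering or transit gateway attachments.

    exempt_role_arn_pattern: ARN pattern for the one role allowed to make
    these changes (hypothetical; choose your own).
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyNewNetworkPathsIntoCde",
                "Effect": "Deny",
                "Action": [
                    "ec2:CreateVpcPeeringConnection",
                    "ec2:AcceptVpcPeeringConnection",
                    "ec2:CreateTransitGatewayVpcAttachment",
                ],
                "Resource": "*",
                "Condition": {
                    "ArnNotLike": {"aws:PrincipalArn": exempt_role_arn_pattern}
                },
            }
        ],
    }


scp = build_network_guardrail_scp("arn:aws:iam::*:role/network-admin")
print(json.dumps(scp, indent=2))
```

Attaching a policy like this to the PCI account or its organizational unit means a compromised workload role cannot quietly peer the CDE VPC with something else.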

Layer 2: VPC and Subnet Architecture

Within the PCI account, the CDE runs in a dedicated VPC with:

- Private subnets for payment services and databases

- Public subnets limited to the load balancer

Security groups enforce port-level access:

- Only 443 inbound to the ALB

- Only the application port from ALB to EC2

- Only the database port from EC2 to RDS

NACLs provide a second layer of stateless filtering at the subnet boundary.
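A quick way to check that security groups match the intended port-level design is to compare their ingress rules against an explicit allow-list. The sketch below assumes the input dict follows the shape returned by the EC2 `DescribeSecurityGroups` API (as exposed by boto3). The group ID and allow-list are made up, and prefix lists are not handled.

```python
def unexpected_ingress(security_group: dict, allowed: set) -> list:
    """Return ingress rules that are not in the allow-list.

    allowed: set of (protocol, from_port, to_port, source) tuples, where
    source is a CIDR block or a security group ID.
    """
    findings = []
    for perm in security_group.get("IpPermissions", []):
        proto = perm.get("IpProtocol")
        from_port = perm.get("FromPort")
        to_port = perm.get("ToPort")
        # Collect every source attached to this permission entry.
        sources = [r["CidrIp"] for r in perm.get("IpRanges", [])]
        sources += [r["CidrIpv6"] for r in perm.get("Ipv6Ranges", [])]
        sources += [p["GroupId"] for p in perm.get("UserIdGroupPairs", [])]
        for source in sources:
            rule = (proto, from_port, to_port, source)
            if rule not in allowed:
                findings.append(rule)
    return findings


alb_sg = {
    "GroupId": "sg-alb-example",
    "IpPermissions": [
        {"IpProtocol": "tcp", "FromPort": 443, "ToPort": 443,
         "IpRanges": [{"CidrIp": "0.0.0.0/0"}]},
        {"IpProtocol": "tcp", "FromPort": 22, "ToPort": 22,
         "IpRanges": [{"CidrIp": "0.0.0.0/0"}]},
    ],
}
print(unexpected_ingress(alb_sg, {("tcp", 443, 443, "0.0.0.0/0")}))
```

In this example the stray SSH rule is reported, while the intended 443 rule passes silently.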

Layer 3: VPC Peering and Transit Gateway Controls

If the PCI VPC peers with other VPCs, for example for shared logging, route tables must be locked to only the required routes. Transit gateways must use route table associations that prevent non-PCI accounts from routing traffic into CDE subnets.

In my assessments, these configurations are the most common source of accidental scope expansion.
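One way to catch this kind of drift is to scan route tables in non-PCI VPCs for peering or transit gateway routes whose destination overlaps a CDE subnet. The sketch below assumes route tables shaped like the EC2 `DescribeRouteTables` response. The IDs and CIDR ranges are invented, and only IPv4 destinations are considered.

```python
import ipaddress


def routes_into_cde(route_table: dict, cde_cidrs: list) -> list:
    """Return (route_table_id, destination, via) for routes that can reach the CDE."""
    cde_networks = [ipaddress.ip_network(cidr) for cidr in cde_cidrs]
    findings = []
    for route in route_table.get("Routes", []):
        destination = route.get("DestinationCidrBlock")
        # Only peering and transit gateway routes cross VPC boundaries.
        via = route.get("VpcPeeringConnectionId") or route.get("TransitGatewayId")
        if destination is None or via is None:
            continue
        destination_net = ipaddress.ip_network(destination)
        if any(destination_net.overlaps(net) for net in cde_networks):
            findings.append((route_table.get("RouteTableId"), destination, via))
    return findings


non_pci_table = {
    "RouteTableId": "rtb-nonpci-example",
    "Routes": [
        {"DestinationCidrBlock": "10.20.0.0/16", "VpcPeeringConnectionId": "pcx-example"},
        {"DestinationCidrBlock": "10.30.5.0/24", "TransitGatewayId": "tgw-example"},
        {"DestinationCidrBlock": "0.0.0.0/0", "GatewayId": "igw-example"},
    ],
}
print(routes_into_cde(non_pci_table, ["10.20.1.0/24"]))
```

Here the /16 peering route is flagged because it covers the CDE subnet, even though whoever added it may only have intended to reach a logging subnet in the same VPC.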

Layer 4: OS and Instance-Level Controls

Each EC2 instance in the CDE needs:

- A hardened AMI, ideally CIS-benchmarked

- File integrity monitoring

- Anti-malware

- Centralized logging agents

These customer-managed controls do not exist in serverless, and they are where I see teams burn the most audit preparation hours.

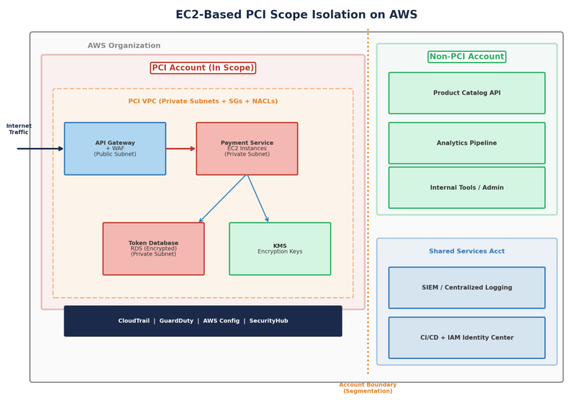

Serverless: Segmentation Controls

Serverless architectures eliminate the OS and much of the traditional network layer. A Lambda function that is not attached to a VPC has no security groups, a DynamoDB table has no NACLs, and there are no iptables rules to configure. Segmentation shifts from network boundaries to identity and policy boundaries.

Layer 1: Account Isolation

Account-level isolation works the same way as in the EC2 design. The PCI account contains only payment-related:

- Lambda functions

- API Gateway configurations

- DynamoDB tables

- KMS keys

Layer 2: API Gateway as a Segmentation Boundary

API Gateway is assessed as part of AWS's own PCI DSS Level 1 Service Provider assessment and acts as a segmentation boundary. It provides:

- Managed WAF integration

- Mutual TLS

- Request validation

- Throttling

Lambda functions never have inbound network listeners. Per the PCI SSC's September 2024 scoping and segmentation guidance for modern network architectures, these managed service interfaces serve as segmentation boundaries because AWS includes them in its own PCI scope.

Layer 3: IAM Roles and Resource Policies

In serverless, the equivalent of a security group is an IAM role.

Each Lambda function should run with a dedicated execution role granting only the minimum permissions required, such as:

- Write to one specific DynamoDB table

- Decrypt with one specific KMS key

Resource policies on DynamoDB, S3, SQS, and KMS provide a second boundary. Even if an attacker obtains credentials, the resource policy can still deny access at the resource level.
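A least-privilege execution role for a single payment function might look like the policy below, alongside a small checker that flags wildcard grants. This is a sketch: the account ID, table name, and key ID are placeholders, and the checker looks only at `Allow` statements and ignores `NotAction` and conditions.

```python
def wildcard_findings(policy: dict) -> list:
    """Return ("action" | "resource", value) pairs for wildcard grants in Allow statements."""
    findings = []
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):
        statements = [statements]
    for statement in statements:
        if statement.get("Effect") != "Allow":
            continue
        actions = statement.get("Action", [])
        resources = statement.get("Resource", [])
        # IAM allows a single string or a list for both elements.
        if isinstance(actions, str):
            actions = [actions]
        if isinstance(resources, str):
            resources = [resources]
        for action in actions:
            if action == "*" or action.endswith(":*"):
                findings.append(("action", action))
        for resource in resources:
            if resource == "*":
                findings.append(("resource", resource))
    return findings


# Placeholder ARNs: one table, one key, nothing else.
payment_writer_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "dynamodb:PutItem",
         "Resource": "arn:aws:dynamodb:us-east-1:111122223333:table/payments"},
        {"Effect": "Allow", "Action": "kms:Decrypt",
         "Resource": "arn:aws:kms:us-east-1:111122223333:key/example-key-id"},
    ],
}
print(wildcard_findings(payment_writer_policy))
```

A role that passes this check is not automatically safe, but a role that fails it is the identity-layer equivalent of an any-any firewall rule.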

Layer 4: Ephemeral Compute and No Lateral Movement

Lambda functions are ephemeral: execution environments spin up, run, and are recycled by the platform. There is no persistent OS, no SSH daemon, no open ports, and no durable filesystem to pivot from.

A compromised function cannot install persistent backdoors, and unless it is attached to a VPC it has no internal network to scan. The blast radius is architecturally constrained to what that function's IAM role permits.

Proving It Works: Segmentation Pen Testing in Cloud

Segmentation without validation is just an assumption.

The PCI SSC Scoping and Segmentation Guidance for Modern Network Architectures from September 2024 is explicit: pen testing of segmentation controls should include tests between CSP management nodes and customer systems, and must consider architectural nuances including:

- Serverless components

- Container clusters

- Sidecars

- Subnets

- Routing tables

- Security groups

This is not traditional network pen testing. Cloud segmentation validation must cover:

- The control plane

- The identity layer

- Resource policies

Not just port scans.

The guidance calls out three testing scenarios that matter in practice.

Testing Scenario 1: Within and Across VPCs

Testing involves more than port scanning between in-scope and out-of-scope VPCs. It should assess whether VPCs can connect to one another, whether those connection points can be exploited, and whether changes to cloud configurations or attacks on the control plane could defeat segmentation.

Questions to test include:

- Can an attacker with access to a non-PCI VPC modify a route table to create a path into the PCI VPC?

- Can a VPC peering connection be exploited to reach CDE subnets?
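The first question can be partly answered before any exploitation attempt by checking which identity policies in the non-PCI account grant route-changing permissions. The sketch below is a simplification: it matches `Allow` statements only, ignores `Deny`, `NotAction`, conditions, and SCPs, and the action list is an assumption about what matters in your environment.

```python
from fnmatch import fnmatchcase

# EC2 actions that can create or alter a network path between VPCs.
ROUTE_CHANGING_ACTIONS = [
    "ec2:CreateRoute",
    "ec2:ReplaceRoute",
    "ec2:DeleteRoute",
    "ec2:AssociateRouteTable",
    "ec2:CreateVpcPeeringConnection",
    "ec2:AcceptVpcPeeringConnection",
]


def allowed_route_actions(policy: dict) -> list:
    """Return the route-changing actions that the policy's Allow statements match."""
    allowed = set()
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):
        statements = [statements]
    for statement in statements:
        if statement.get("Effect") != "Allow":
            continue
        patterns = statement.get("Action", [])
        if isinstance(patterns, str):
            patterns = [patterns]
        for pattern in patterns:
            for action in ROUTE_CHANGING_ACTIONS:
                # IAM action names are case-insensitive and support * and ? wildcards.
                if fnmatchcase(action.lower(), pattern.lower()):
                    allowed.add(action)
    return sorted(allowed)


developer_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": ["ec2:Describe*", "ec2:*Route"], "Resource": "*"}
    ],
}
print(allowed_route_actions(developer_policy))
```

Any principal that shows up here is a candidate starting point for the tester's attempt to build a path into the PCI VPC.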

Testing Scenario 2: Inter-Account Connectivity Within the Same CSP

Where multiple accounts exist within the same CSP, such as accounts inside one AWS Organization, testing should evaluate scope boundaries between those accounts.

The guidance is specific: testers should contemplate an attack against the control plane, such as the master account console, to change configuration, gain access, or create new resources that interact with CDE resources.

In practice, this means validating:

- Whether an attacker in a non-PCI account can assume a role in the PCI account

- Whether SCPs block cross-account actions correctly

- Whether shared services such as logging or CI/CD pipelines provide a bridge into the CDE
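For the first check, a reasonable starting point is to review the trust policy of every role in the PCI account and list principals that belong to other accounts. The sketch below assumes standard IAM trust policy JSON. The account IDs and role names are placeholders, and anything that is not a recognizable ARN or account ID for the PCI account is conservatively reported.

```python
import re

# Extracts the 12-digit account ID from an IAM or STS principal ARN.
ACCOUNT_IN_ARN = re.compile(r"^arn:aws[a-z-]*:(?:iam|sts)::(\d{12}):")


def external_principals(trust_policy: dict, pci_account_id: str) -> list:
    """Return AWS principals in Allow statements that are outside the PCI account."""
    findings = []
    statements = trust_policy.get("Statement", [])
    if isinstance(statements, dict):
        statements = [statements]
    for statement in statements:
        if statement.get("Effect") != "Allow":
            continue
        principal = statement.get("Principal", {})
        if principal == "*":
            findings.append("*")
            continue
        aws_principals = principal.get("AWS", [])
        if isinstance(aws_principals, str):
            aws_principals = [aws_principals]
        for entry in aws_principals:
            match = ACCOUNT_IN_ARN.match(entry)
            # A bare 12-digit account ID is also a valid principal form.
            account = match.group(1) if match else entry
            if account != pci_account_id:
                findings.append(entry)
    return findings


trust_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "sts:AssumeRole",
         "Principal": {"AWS": ["arn:aws:iam::111122223333:role/deployer",
                               "arn:aws:iam::444455556666:root"]}},
        {"Effect": "Allow", "Action": "sts:AssumeRole",
         "Principal": {"Service": "lambda.amazonaws.com"}},
    ],
}
print(external_principals(trust_policy, pci_account_id="111122223333"))
```

Each reported principal is a cross-account path the tester should try to exercise. Shared logging and CI/CD roles tend to show up here first.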

Testing Scenario 3: Across Multiple CSPs

Organizations spanning multiple CSPs must test:

- WAN connections

- Remote access

- Public network connections

- Linkages using combined identity and access management

The key question is simple: can segmentation be defeated through cross-CSP mechanisms? Joint identity or entitlement-granting processes often become the hidden path that reconnects environments teams believed were isolated.

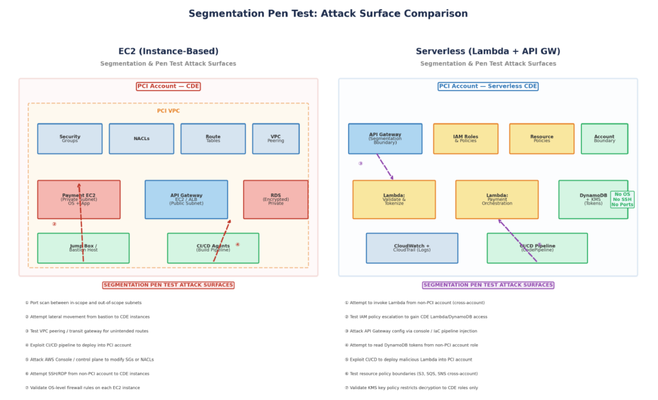

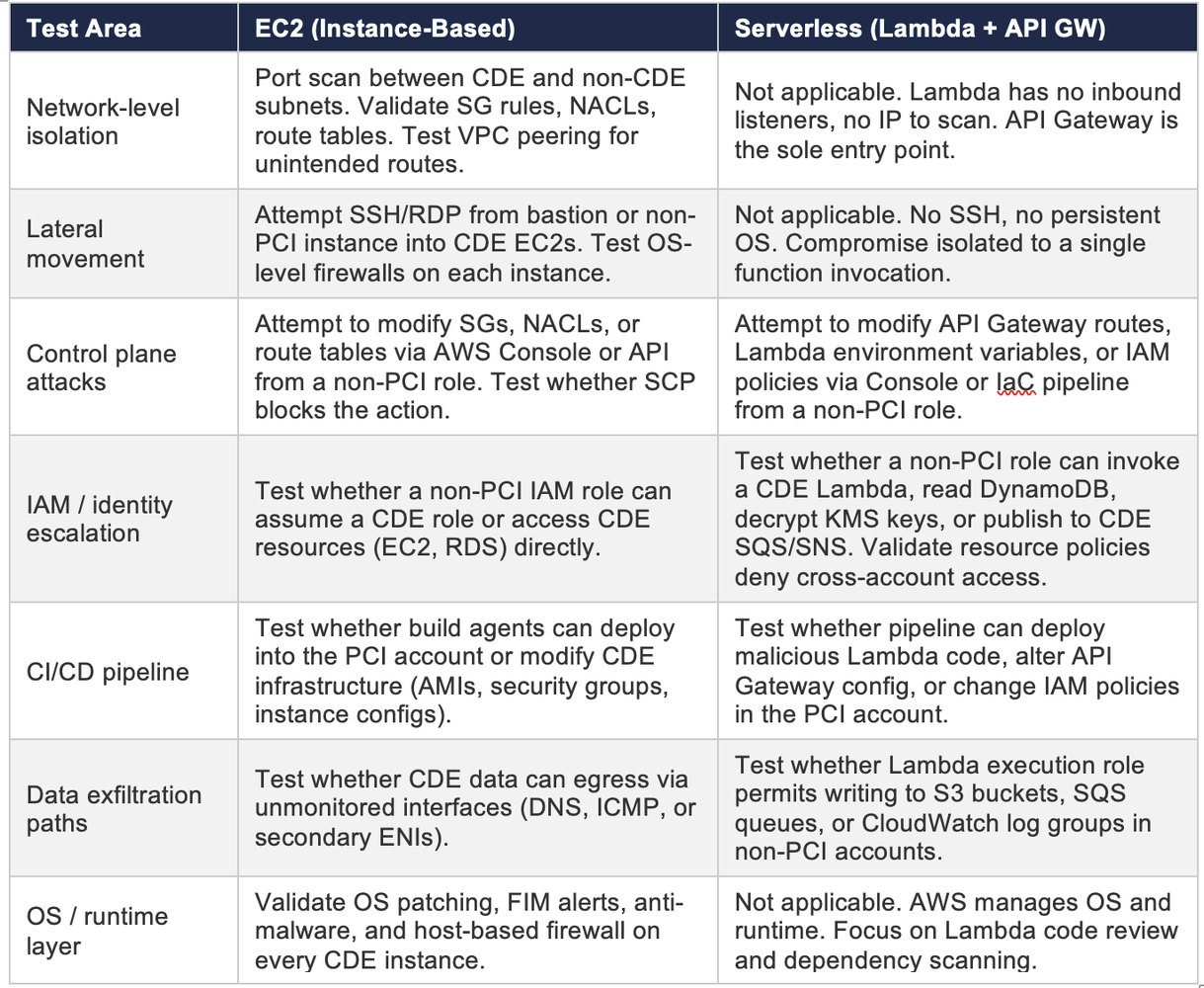

EC2 vs. Serverless: How the Attack Surface Changes

The attack surface looks fundamentally different between instance-based and serverless architectures.

What Testers Must Validate in EC2 Environments

In EC2-heavy environments, segmentation testing still looks familiar:

- Validate subnet boundaries

- Probe east-west network reachability

- Inspect route tables and transit gateway propagation

- Test security groups and NACL effectiveness

- Evaluate OS hardening and endpoint controls

- Check whether bastions, shared logging, or management tooling bridge into the CDE

What Testers Must Validate in Serverless Environments

In serverless environments, testing must shift:

- Validate IAM trust and permission boundaries

- Inspect API Gateway policies and WAF enforcement

- Test KMS key policy isolation

- Review resource policies across DynamoDB, S3, SQS, and eventing layers

- Check whether deployment pipelines or admin identities can create unintended access paths

- Validate that ephemeral compute cannot be used as a persistence or pivot layer

The net effect is that serverless often reduces classic lateral movement opportunities, but only if identity boundaries are disciplined. Weak IAM in serverless is the equivalent of a flat network in EC2.

Practical Takeaways

If you remember only a few things from this guide, make them these:

- PCI scope is not just where PAN lives. It includes everything that can affect the security of PAN.

- Segmentation is optional in theory but essential in practice if you want to keep your scope manageable.

- Data-flow mapping is the foundation of every scope decision.

- In EC2, segmentation is mostly a network architecture problem.

- In serverless, segmentation is mostly an identity and resource policy problem.

- Pen testing must validate the control plane and identity model, not just network reachability.

Most PCI programs get expensive because organizations accept inherited sprawl. The fastest route to lower scope is not a cleaner spreadsheet. It is a cleaner architecture.

References

- PCI DSS v4.0.1, Information Supplement: Guidance for PCI DSS Scoping and Network Segmentation

- PCI DSS Scoping and Segmentation Guidance for Modern Network Architectures, PCI SSC Information Supplement, September 2024

- Original LinkedIn article by Ratanshi Puri: Scoping and Segmentation for Cloud Infrastructure: PCI-DSS v4.0.1